Get the latest tech news

Deploying DeepSeek on 96 H100 GPUs

<p>DeepSeek is a popular open-source large language model (LLM) praised for its strong performance. However, its large size and unique architecture, which us...

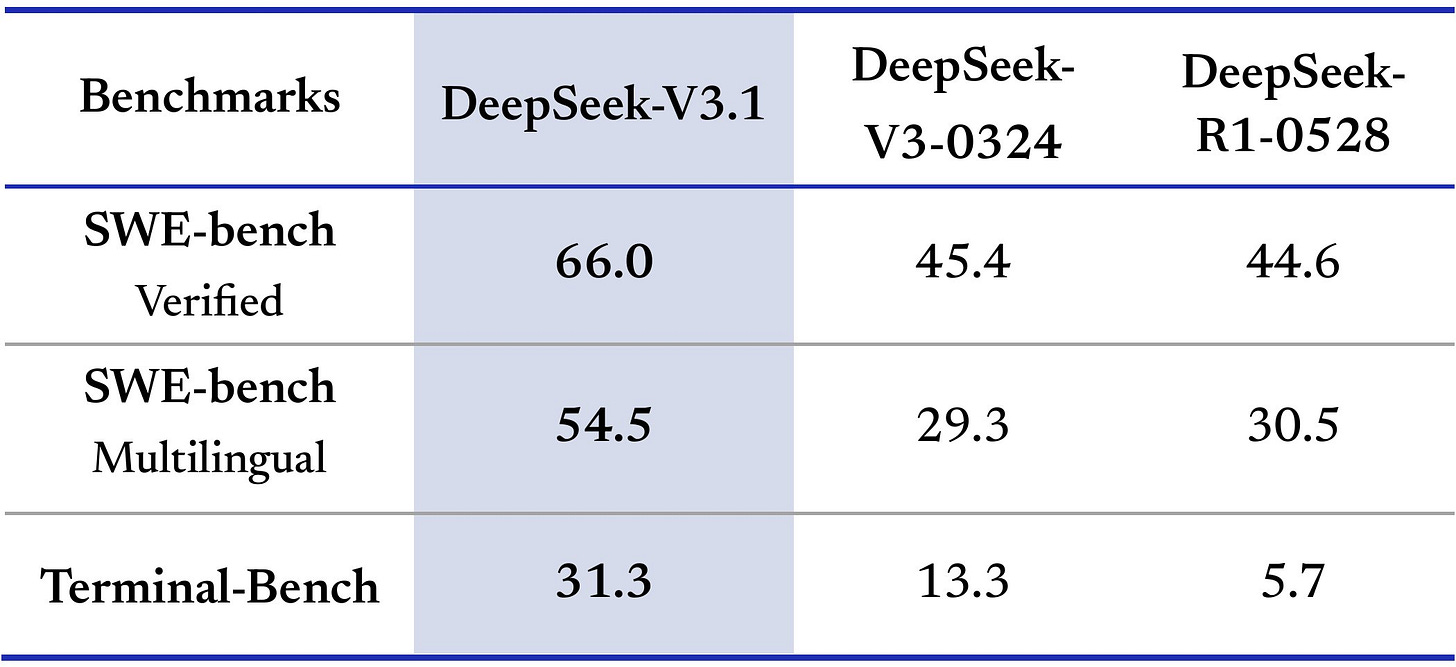

Enhanced Scalability: With an intermediate dimension of 18,432, high TP degrees (e.g., TP32) result in inefficient fragmentation into small-unit segments (e.g., 576 units), which are not divisible by 128—a common alignment boundary for modern GPUs such as H100. As shown in the figure on the right, these performance gains are largely attributed to 4× larger batch sizes enabled by EP, which enhances scalability by significantly reducing per-GPU memory consumption of model weights. Atlas Cloud Team — Jerry Tang, Wei Xu, Simon Xue, Harry He, Eva Ma, and colleagues — for providing a 96-device NVIDIA H100 cluster and offering responsive engineering support.

Or read this on Hacker News