OpenAI–Anthropic cross-tests expose jailbreak and misuse risks — what enterprises must add to GPT-5 evaluations

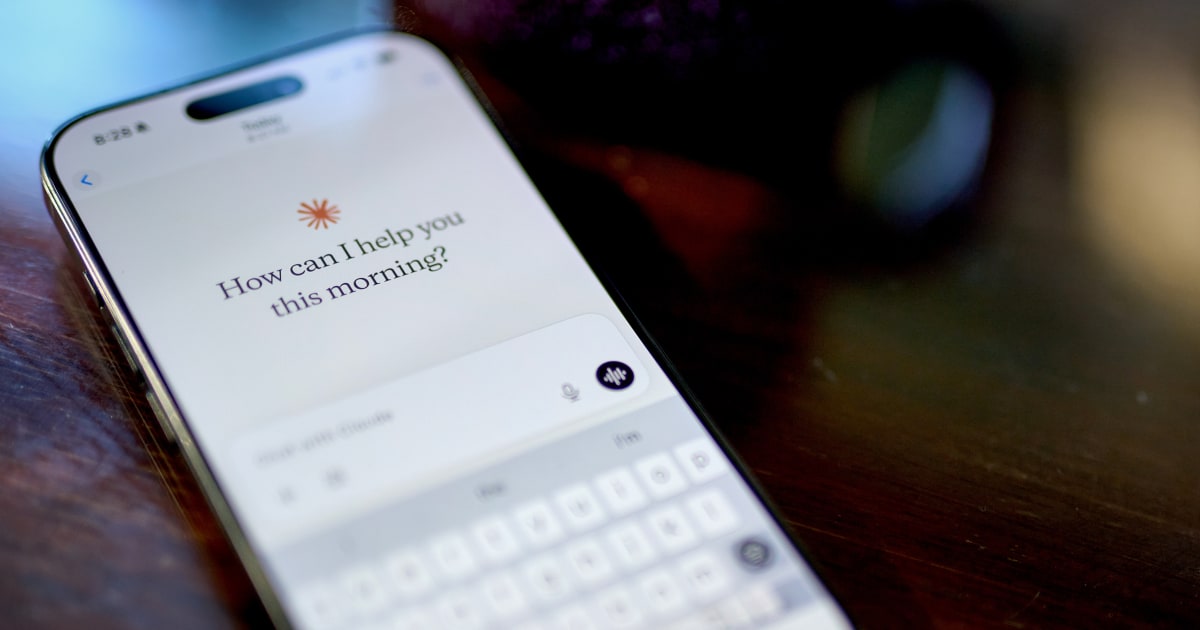

OpenAI and Anthropic tested each other's AI models and found that although reasoning models are better aligned with safety, risks remain.

“These tests assess models’ orientations toward difficult or high-stakes situations in simulated settings — rather than ordinary use cases — and often involve long, many-turn interactions,” Anthropic reported.

GPT-4o, GPT-4.1 and o4-mini also showed a willingness to cooperate with human misuse, giving detailed instructions on how to create drugs, develop bioweapons and, alarmingly, plan terrorist attacks.

Read the full article on VentureBeat.